In linear algebra, the Cayley–Hamilton theorem (named after the mathematicians Arthur Cayley and William Hamilton) states that every square matrix over a commutative ring (such as the real or complex field) satisfies its own characteristic equation.

More precisely:

If $A$ is a given $n \times n$ matrix and $I_n$ is the $n \times n$ identity matrix, then the characteristic polynomial of $A$ is defined as

$$p(\lambda) = \det(\lambda I_n - A),$$

where "det" is the determinant operation. Since the entries of the matrix $\lambda I_n - A$ are (linear or constant) polynomials in $\lambda$, the determinant is also a polynomial in $\lambda$. The Cayley–Hamilton theorem states that "substituting" the matrix $A$ for $\lambda$ in this polynomial results in the zero matrix:

$$p(A) = 0.$$

The powers of $\lambda$ that have become powers of $A$ by the substitution should be computed by repeated matrix multiplication, and the constant term should be multiplied by the identity matrix (the zeroth power of $A$) so that it can be added to the other terms. The theorem allows $A^n$ to be expressed as a linear combination of the lower matrix powers of $A$.
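The substitution described above can be checked mechanically. The following is a minimal sketch, not a definitive implementation: the helper names `mat_mul`, `char_poly_coeffs` and `eval_poly_at_matrix` are hypothetical, the 3×3 test matrix is an arbitrary choice, and the characteristic polynomial is computed with the Faddeev–LeVerrier recursion, which is an assumption of this sketch rather than anything taken from the text. Exact rational arithmetic is used, so the check is meaningful only over the rationals.

```python
from fractions import Fraction


def mat_mul(X, Y):
    """Multiply two square matrices given as lists of rows."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def char_poly_coeffs(A):
    """Return [c_0, ..., c_{n-1}, 1], the coefficients of det(t*I - A).

    Faddeev-LeVerrier recursion (assumed here, not from the text):
    M_1 = I, c_{n-k} = -tr(A M_k) / k, M_{k+1} = A M_k + c_{n-k} I.
    """
    n = len(A)
    A = [[Fraction(x) for x in row] for row in A]
    coeffs = [Fraction(0)] * n + [Fraction(1)]
    M = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
    for k in range(1, n + 1):
        AM = mat_mul(A, M)
        c = -sum(AM[i][i] for i in range(n)) / k
        coeffs[n - k] = c
        M = [[AM[i][j] + (c if i == j else 0) for j in range(n)]
             for i in range(n)]
    return coeffs


def eval_poly_at_matrix(coeffs, A):
    """Evaluate sum_i coeffs[i] * A^i by Horner's rule.

    Each coefficient is added as a multiple of the identity matrix,
    which is how the constant term is handled in the theorem.
    """
    n = len(A)
    A = [[Fraction(x) for x in row] for row in A]
    result = [[Fraction(0)] * n for _ in range(n)]
    for c in reversed(coeffs):
        result = mat_mul(result, A)
        for i in range(n):
            result[i][i] += c
    return result


A = [[2, 1, 0], [0, 1, -1], [3, 0, 4]]  # arbitrary illustrative matrix
p = char_poly_coeffs(A)
p_of_A = eval_poly_at_matrix(p, A)
print(all(entry == 0 for row in p_of_A for entry in row))
```

Horner's rule is used so that only matrix products and additions of scalar multiples of the identity are needed, mirroring the description of how the substituted polynomial is to be evaluated.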

When the ring is a field, the Cayley–Hamilton theorem is equivalent to the statement that the minimal polynomial of a square matrix divides its characteristic polynomial.

Example

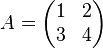

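As a concrete example, let

$$A = \begin{pmatrix} 1 & 2 \\ 3 & 4 \end{pmatrix}.$$

Its characteristic polynomial is given by

$$p(\lambda) = \det(\lambda I_2 - A) = \det\begin{pmatrix} \lambda - 1 & -2 \\ -3 & \lambda - 4 \end{pmatrix} = (\lambda - 1)(\lambda - 4) - (-2)(-3) = \lambda^2 - 5\lambda - 2.$$

The Cayley–Hamilton theorem claims that, if we define

$$p(X) = X^2 - 5X - 2I_2,$$

then

$$p(A) = A^2 - 5A - 2I_2 = \begin{pmatrix} 0 & 0 \\ 0 & 0 \end{pmatrix},$$

which one can verify easily.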
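The verification can be spelled out in a few lines. This is a small sketch that assumes the example matrix is A = [[1, 2], [3, 4]], whose characteristic polynomial is λ² − 5λ − 2; the helper `mat_mul` is a hypothetical name introduced only for illustration. It also shows how the relation A² = 5A + 2I reduces a higher power of A to a linear combination of A and the identity.

```python
def mat_mul(X, Y):
    """Multiply two square matrices given as lists of rows."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


A = [[1, 2], [3, 4]]   # assumed example matrix
I2 = [[1, 0], [0, 1]]

# p(A) = A^2 - 5A - 2*I2, computed entry by entry.
A2 = mat_mul(A, A)
p_of_A = [[A2[i][j] - 5 * A[i][j] - 2 * I2[i][j] for j in range(2)]
          for i in range(2)]
print(p_of_A)  # [[0, 0], [0, 0]]

# Since A^2 = 5A + 2I, we get A^3 = 5A^2 + 2A = 27A + 10I.
A3 = mat_mul(A2, A)
reduced = [[27 * A[i][j] + 10 * I2[i][j] for j in range(2)] for i in range(2)]
print(A3 == reduced)  # True
```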

A direct algebraic proof

This proof uses just the kind of objects needed to formulate the Cayley–Hamilton theorem: matrices with polynomials as entries. The matrix $tI_n - A$, whose determinant is the characteristic polynomial $p(t)$ of $A$, is such a matrix, and since polynomials form a commutative ring, it has an adjugate

$$B = \operatorname{adj}(tI_n - A).$$

Then according to the right-hand fundamental relation of the adjugate one has

$$(tI_n - A)\,B = \det(tI_n - A)\,I_n = p(t)\,I_n.$$

Since $B$ is also a matrix with polynomials in $t$ as entries, one can for each $i$ collect the coefficients of $t^i$ in each entry to form a matrix $B_i$ of numbers, such that one has

$$B = \sum_{i=0}^{n-1} t^i B_i$$

(the way the entries of $B$ are defined makes clear that no powers higher than $t^{n-1}$ occur). While this looks like a polynomial with matrices as coefficients, we shall not consider such a notion; it is just a way to write a matrix with polynomial entries as a linear combination of constant matrices, and the coefficient $t^i$ has been written to the left of the matrix to stress this point of view. Now one can expand the matrix product in our equation by bilinearity:

$$p(t)\,I_n = (tI_n - A)\,B = \sum_{i=0}^{n-1} t^{i+1} B_i - \sum_{i=0}^{n-1} t^i A B_i = t^n B_{n-1} + \sum_{i=1}^{n-1} t^i \left(B_{i-1} - A B_i\right) - A B_0.$$

Writing

$$p(t)\,I_n = t^n I_n + t^{n-1} c_{n-1} I_n + \cdots + t\,c_1 I_n + c_0 I_n,$$

one obtains an equality of two matrices with polynomial entries, written as linear combinations of constant matrices with powers of $t$ as coefficients. Such an equality can hold only if in any matrix position the entry that is multiplied by a given power $t^i$ is the same on both sides; it follows that the constant matrices with coefficient $t^i$ in both expressions must be equal. Writing these equations for $i$ from $n$ down to 0 one finds

$$B_{n-1} = I_n, \qquad B_{i-1} - A B_i = c_i I_n \quad (1 \le i \le n-1), \qquad -A B_0 = c_0 I_n.$$


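We multiply the equation of the coefficients of $t^i$ from the left by $A^i$, and sum up; the left-hand sides form a telescoping sum and cancel completely, which results in the equation

$$0 = A^n B_{n-1} + \sum_{i=1}^{n-1} \left(A^i B_{i-1} - A^{i+1} B_i\right) - A B_0 = A^n + c_{n-1} A^{n-1} + \cdots + c_1 A + c_0 I_n = p(A).$$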

This completes the proof.
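The coefficient comparison in the proof can be traced on a small case. The sketch below is illustrative only: it assumes that the coefficient equations read B_{n-1} = I, B_{i-1} − A·B_i = c_i·I for 1 ≤ i ≤ n−1, and −A·B_0 = c_0·I, where c_0, ..., c_{n-1} are the non-leading coefficients of the characteristic polynomial. The function names `mat_mul` and `adjugate_coefficients` are hypothetical, and the input is the 2×2 matrix [[1, 2], [3, 4]] with c_0 = −2 and c_1 = −5.

```python
def mat_mul(X, Y):
    """Multiply two square matrices given as lists of rows."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def adjugate_coefficients(A, c):
    """Return [B_0, ..., B_{n-1}] built from the coefficient equations.

    Starts from B_{n-1} = I and solves B_{i-1} = A B_i + c_i I downward.
    c = [c_0, ..., c_{n-1}] is assumed to hold the non-leading
    coefficients of det(t*I - A).
    """
    n = len(A)
    B = [None] * n
    B[n - 1] = [[int(r == s) for s in range(n)] for r in range(n)]
    for i in range(n - 1, 0, -1):
        AB = mat_mul(A, B[i])
        B[i - 1] = [[AB[r][s] + (c[i] if r == s else 0) for s in range(n)]
                    for r in range(n)]
    return B


A = [[1, 2], [3, 4]]
c = [-2, -5]  # p(t) = t^2 - 5t - 2
B = adjugate_coefficients(A, c)
print(B[0])  # [[-4, 2], [3, -1]]

# The remaining equation, -A B_0 = c_0 I, is not used by the recursion,
# so checking it is a genuine consistency test.
AB0 = mat_mul(A, B[0])
last_ok = all(-AB0[r][s] == (c[0] if r == s else 0)
              for r in range(2) for s in range(2))
print(last_ok)  # True
```

Here B_0 and B_1 = I are the constant matrices in adj(tI − A) = t·B_1 + B_0, which for this matrix is the matrix with rows (t − 4, 2) and (3, t − 1).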
